AI Demands a Fresh Look at System Architecture

Engineers are rethinking system architecture to accommodate increasing data rates and performance demands. Emerging technologies like co-packaged optics and OSFP-XD pluggables promise higher bandwidth and improved efficiency.

Unless you have spent the last two years living in a remote cave in the Himalayas, the emergence of artificial intelligence has likely caught your attention. There is little doubt that AI will be a major disrupting technology; it has already begun to impact everything from how new drugs are discovered to reconsideration of traditional career paths. The unprecedented rate of advances in AI technology staggers the imagination and makes comparison to past breakthrough innovations difficult. Not only is the technology evolving at an incredible rate, but broad adoption and integration to solve real-world challenges are experiencing “flash growth.” It has been estimated that it took seven years for the Internet to attract 100 million users. ChatGPT registered 100 million users in the first two months after it launched.

Data centers are the core of AI resources and they are under pressure to dramatically expand bandwidth and storage capacity while reducing power consumption. Traditional racks of servers linked with copper cables are being replaced by AI processing clusters incorporating thousands of CPUs and GPUs interconnected with fiber optic cables that can deliver higher data rates at longer distances with lower distortion. Advanced chip technology and emerging silicon photonics can process data faster than traditional input/output channels can support. This has resulted in I/O channels becoming a data bottleneck, putting pressure on designers to find new ways to deal with terabit data streams.

At the core of a high-speed network is the switch, which links servers, storage, and other resources, enabling them to communicate. The performance of the switch and its associated interconnects is a key factor in determining the bandwidth of the network.

Next-generation switches set the pace of system performance, which makes keeping up with the I/O speed of the switch an imperative. Starting from 640 Gb/s in 2010, switch capacity has doubled nearly every two years. The Tomahawk 5 switch chip from Broadcom, in production now, delivers 51.2 Tb/s of switching capacity. Next-generation 102.4 Tb/s switches will likely require 200 Gb/s per lane technology and are expected to enter the market within the next two years. In addition to I/O panel density, designers of next-generation systems face challenges including thermal management, power reduction, and latency, all while increasing performance.
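The lane arithmetic behind these figures can be sketched in a few lines of Python. This is a rough illustration only: the function names are hypothetical, and it assumes the full switch capacity is spread evenly across lanes of a single rate.

```python
import math


def doublings(start_gbps: float, end_gbps: float) -> float:
    """Number of capacity doublings needed to grow from start to end."""
    return math.log2(end_gbps / start_gbps)


def lanes_required(capacity_gbps: int, lane_rate_gbps: int) -> int:
    """Electrical lanes needed to carry the full switch capacity (rounded up)."""
    return -(-capacity_gbps // lane_rate_gbps)  # integer ceiling division


if __name__ == "__main__":
    # Growth from the 640 Gb/s generation to a 51.2 Tb/s switch
    print(f"640 Gb/s -> 51.2 Tb/s: {doublings(640, 51_200):.1f} doublings")

    # Lane counts for current and next-generation capacities
    for capacity, lane_rate in [(51_200, 100), (102_400, 100), (102_400, 200)]:
        lanes = lanes_required(capacity, lane_rate)
        print(f"{capacity / 1000:.1f} Tb/s at {lane_rate} Gb/s per lane: {lanes} lanes")
```

Under these assumptions, a 102.4 Tb/s switch at 200 Gb/s per lane needs the same 512 lanes as a 51.2 Tb/s switch at 100 Gb/s, which is one way to see why the higher lane rate is attractive: staying at 100 Gb/s would double the lane count to 1,024.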

Connecting the switch chip to panel-mounted I/O connectors through copper PCB traces is severely limited by signal degradation, because high-speed signals suffer significant loss even in the most advanced PCB laminate materials.

Several alternative solutions have been developed.

Near-chip architecture transitions the signals out of the PCB to low-loss twinaxial cables terminated immediately adjacent to the chip. These cables are directly connected to pluggable transceivers mounted on the chassis I/O panel, avoiding PCB losses.

The near-chip solution begins to face panel density challenges as we approach the signal capacity limits of QSFP-DD and OSFP pluggable transceivers. Increasing the bandwidth of each channel from current 100 Gb/s to 200 Gb/s is a solution for at least the next generation of switches.

System design engineers often prefer to extend familiar technology rather than introduce a new architecture that could increase risk. The OSFP-XD pluggable transceiver as defined by the OSFP 1600G specification doubles the number of electrical lanes from 8 to 16, supporting both 16 x 100 Gb/s and 16 x 200 Gb/s host interfaces and enabling up to 3.2 Tb/s throughput. The OSFP-XD power profile limits power consumption to less than 25 watts. Thermal management has become a key factor in system design. Current OSFP-XD pluggables are designed for circulating air cooling but can be adapted to cold-plate cooling if required.
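As a rough sketch of what the doubled lane count means at the I/O panel, the following Python snippet computes per-module throughput and the number of panel ports needed to expose a switch's full capacity. The function names are illustrative, and the calculation assumes every lane runs at the same rate and that all switch capacity is routed to front-panel modules.

```python
OSFP_LANES = 8      # electrical lanes in a standard OSFP module, per the text
OSFP_XD_LANES = 16  # OSFP-XD doubles the lane count


def module_throughput_gbps(lanes: int, lane_rate_gbps: int) -> int:
    """Aggregate module throughput, assuming all lanes run at the same rate."""
    return lanes * lane_rate_gbps


def ports_required(switch_capacity_gbps: int, module_gbps: int) -> int:
    """Panel ports needed to expose the full switch capacity (rounded up)."""
    return -(-switch_capacity_gbps // module_gbps)  # integer ceiling division


if __name__ == "__main__":
    xd_100g = module_throughput_gbps(OSFP_XD_LANES, 100)
    xd_200g = module_throughput_gbps(OSFP_XD_LANES, 200)
    osfp_200g = module_throughput_gbps(OSFP_LANES, 200)

    print(f"OSFP-XD at 100 Gb/s per lane: {xd_100g} Gb/s per module")
    print(f"OSFP-XD at 200 Gb/s per lane: {xd_200g} Gb/s per module")
    print(f"51.2 Tb/s switch, OSFP-XD at 100G lanes: {ports_required(51_200, xd_100g)} ports")
    print(f"102.4 Tb/s switch, OSFP-XD at 200G lanes: {ports_required(102_400, xd_200g)} ports")
    print(f"102.4 Tb/s switch, OSFP at 200G lanes: {ports_required(102_400, osfp_200g)} ports")
```

With these numbers, 16 lanes at 200 Gb/s give 3.2 Tb/s per module, so a 102.4 Tb/s switch fits in 32 OSFP-XD ports, while an 8-lane module at the same lane rate would need 64.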

The XD pluggable transceiver is somewhat longer and slightly wider than the OSFP and QSFP-DD profiles, trading increased capacity for I/O panel space. Although not backward compatible, the evolution of the OSFP pluggable transceiver to the OSFP-XD (extra density) transceiver retains a similar form factor.

Design engineers must make trade-offs between packaging density and performance.

Because they are longer and wider than standard OSFP and QSFP-DD pluggables, OSFP-XD transceivers occupy slightly more PCB real estate and are not backward compatible with standard OSFP connectors.

OSFP-XD pluggables are focused on supporting 51.2 Tb switches as well as next-generation 102.4 Tb chips. Although unproven at this point, OSFP-XD connectors may be capable of supporting next-generation 204.8 Tb ASICs.

A second alternative is to package the system using co-packaged optics (CPO), which replaces traditional copper channels with optical links that offer higher bandwidth and greater I/O density.

The switch and a series of optical engines are mounted on a substrate. The electrical path length from switch to optical engine is extremely short, reducing copper losses to a minimum. Converting signals from electrical to optical greatly extends reach and reduces power consumption by as much as 30%.

Compared to OSFP or QSFP-DD pluggables, terminating optical fibers with multi-position small profile optical connectors provides greatly increased signal density at the I/O panel. By one estimate, a 51.2 Tb switch could require sixteen 3.2 Tb optical engines, each of which generates 64 fibers. The fiber count at a server I/O panel could total 1,024 fibers requiring termination on a standard 1.75″ by 19″ 1RU panel. Expected advances in AI will require future systems to support 102.4 Tb (32 x 3200G) ports, a density unlikely to be achieved using pluggable transceivers. Thermal considerations have become a limiting factor in new system design, increasing the likelihood that advanced systems will be forced to abandon 1RU chassis and adopt larger 1.5 or 2RU enclosures.
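The fiber-count estimate above can be reproduced with a short Python sketch. The engine count, fibers per engine, and panel dimensions come from the text; the 16-fiber connector is purely an assumed value for illustration, and the function names are hypothetical.

```python
def optical_engines(switch_capacity_gbps: int, engine_gbps: int) -> int:
    """Optical engines needed to carry the full switch capacity (rounded up)."""
    return -(-switch_capacity_gbps // engine_gbps)  # integer ceiling division


def total_fibers(engines: int, fibers_per_engine: int) -> int:
    """Fibers that must be terminated at the I/O panel."""
    return engines * fibers_per_engine


def connectors_required(fibers: int, fibers_per_connector: int) -> int:
    """Multi-fiber connectors needed to terminate all fibers (rounded up)."""
    return -(-fibers // fibers_per_connector)


if __name__ == "__main__":
    engines = optical_engines(51_200, 3_200)  # 51.2 Tb switch, 3.2 Tb engines
    fibers = total_fibers(engines, 64)        # 64 fibers per engine, per the estimate
    panel_area_sq_in = 1.75 * 19              # standard 1RU panel face

    # ASSUMPTION: 16 fibers per connector, chosen only to illustrate the math
    connectors = connectors_required(fibers, 16)

    print(f"Optical engines: {engines}")
    print(f"Total fibers: {fibers}")
    print(f"Fibers per square inch of 1RU panel: {fibers / panel_area_sq_in:.1f}")
    print(f"Connectors at 16 fibers each (assumed): {connectors}")
```

Changing the assumed fibers-per-connector value shows how strongly the choice of connector drives panel layout: the 1,024 fibers are fixed by the optical engines, but the number of panel positions they occupy is not.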

CPO architecture has been a subject of intense debate, as it requires a significant change in the way high-performance systems are designed. The advantages of conventional interconnect using pluggable QSFP-DD or OSFP connectors include an established ecosystem of global suppliers, easily hot swappable modules, field repairability, and familiar manufacturing processes. Pluggables can support both copper and fiber. CPO requires commitment to optical I/O.

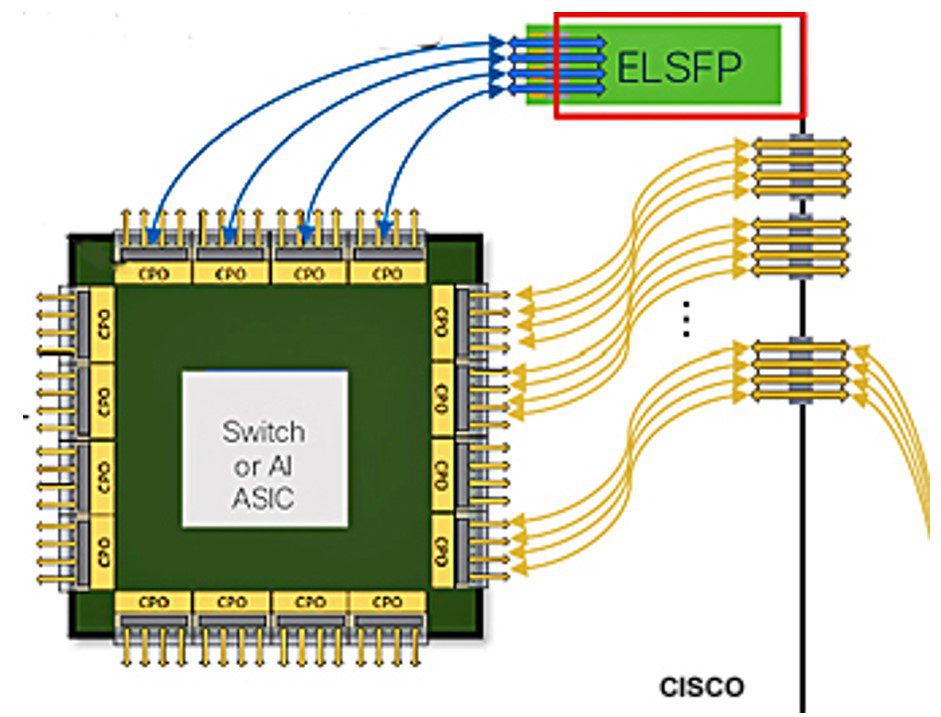

The Optical Internetworking Forum (OIF) has been an active proponent of CPO architecture and has published implementation agreements for a 3.2 Tb/s transceiver module and an external small form-factor pluggable laser (ELSFP).

CPO is a relatively new technology with limited volume production experience. It currently lacks a broad, tooled global vendor base and raises questions about fiber management inside the box, system reliability, and field serviceability. A documented test protocol has yet to be established. An external laser is currently the location of choice, but future generations may move the laser onto the switch substrate, which raises thermal management issues and may require the introduction of liquid cooling.

OSFP-XD could be the choice for next-generation 102.4 Tb switch applications to minimize risk. By the time 204.8 Tb switches become available, the currently unresolved issues surrounding co-packaged optics should be settled, and CPO using advanced silicon photonics will likely become the preferred option.

A key challenge of all new system design is a mandate to reduce power consumption. Both OSFP-XD and CPO claim to support this objective, but they are not the only technologies being developed to achieve greater system efficiency. Continuing increases in chip density will pack transistors closer together, reducing the power required to communicate. As chips move from the current 100G at 5 nm to 200G at 2 nm, power consumption is projected to decrease by as much as 56%.

The rising interest in linear pluggable optics (LPO) stems from the potential to reduce power consumption by eliminating digital signal processor (DSP) chips from pluggable transceivers. Reduced latency and cost provide additional incentives. Ongoing investigation by the LPO MSA should reveal whether retiming chips can be dropped from one or both sides of a pluggable interface while maintaining performance. The resulting specification will define module and network requirements to achieve interoperability for both electrical and optical elements. The objective is to create a verified multi-source supply ecosystem. A linear pluggable optic version of OSFP-XD is a likely extension of the current interface.

It is unclear if and when these technologies will be designed into next-generation devices, but they offer potential solutions to problems that could limit the performance of systems already on the roadmap.
