New Connectivity Solutions for the AI Data Center

Advanced connectivity solutions are emerging to support new AI data center architectures. High-speed board-to-board connectors, next-generation cables, backplanes, and near-ASIC connector-to-cable solutions operating at speeds up to 224 Gb/s-PAM4 will accelerate the future of computing.

By Wai Kiong Poon, Senior Global Product Manager for Mirror Mezz, Molex

Artificial intelligence (AI) and machine learning (ML) applications are creating unprecedented demands for computational power, massive storage capacity, and seamless connectivity. High-speed, efficient communications between electronic components enable AI data centers to process colossal datasets and produce real-time responses in the form of text, video, audio, graphics, and more.

To keep pace with accelerating performance requirements, AI data center architects must scale their system fabrics to support data transmission rates of 224 Gb/s using PAM4 modulation. This scheme places the burden on leading-edge interconnects to provide new strategies for routing, space efficiency, and power management.

Advanced connectivity solutions, including high-speed board-to-board connectors, next-generation cables, backplanes, and near-ASIC connector-to-cable solutions operating at speeds up to 224 Gb/s-PAM4, are critical to new data center designs.

New architectural innovations must surpass daunting performance hurdles. While 112 Gb/s-PAM4 signaling technology was considered a major technological breakthrough, achieving 224 Gb/s requires much more than simply “doubling the bandwidth.” Systems designed for data transfer rates of 224 Gb/s introduce major physical challenges, from maintaining signal integrity and containing electromagnetic interference (EMI) to implementing more powerful thermal management strategies.
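To put the jump from 112 Gb/s to 224 Gb/s in channel terms, the short sketch below converts a PAM4 bit rate into a symbol rate and a Nyquist frequency. It relies only on the fact that PAM4 carries two bits per symbol; the function name `pam4_channel_numbers` is hypothetical, and the figures are nominal values that ignore coding overhead.

```python
def pam4_channel_numbers(bit_rate_gbps: float, bits_per_symbol: int = 2):
    """Return (symbol rate in GBd, Nyquist frequency in GHz) for a serial lane.

    PAM4 uses four amplitude levels, so each symbol carries two bits.
    The Nyquist frequency is half the symbol rate.
    """
    symbol_rate_gbd = bit_rate_gbps / bits_per_symbol
    nyquist_ghz = symbol_rate_gbd / 2
    return symbol_rate_gbd, nyquist_ghz


for rate in (112, 224):
    baud, nyquist = pam4_channel_numbers(rate)
    print(f"{rate} Gb/s PAM4: {baud:.0f} GBd, Nyquist {nyquist:.0f} GHz")
```

Doubling the bit rate doubles the Nyquist frequency of the channel, from roughly 28 GHz to roughly 56 GHz, which is one reason that loss, crosstalk, and connector tolerances become much harder to manage.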

Equally problematic is the continuous demand to elevate performance while squeezing more capacity and connectivity into shrinking spaces. As a result, the question is not simply how to upgrade underlying compute and connectivity capabilities to support 224 Gb/s rates, but how best to redesign an AI data center around 224G technology innovations. This requires new levels of industry collaboration and co-development across the ecosystem to ensure interoperability between components, hardware, architecture, and connectivity. It also requires attention to both mechanical integrity and signal integrity in order to address the physics of the electrical channel and engineer superior mechanical solutions.

Designing — or redesigning — solutions for 224 Gb/s requires seamless electrical connections between all components to prevent performance bottlenecks. A co-development mindset is crucial to creating a transparent methodology for identifying and resolving signal integrity and other performance pitfalls.

High-speed board-to-board connectivity

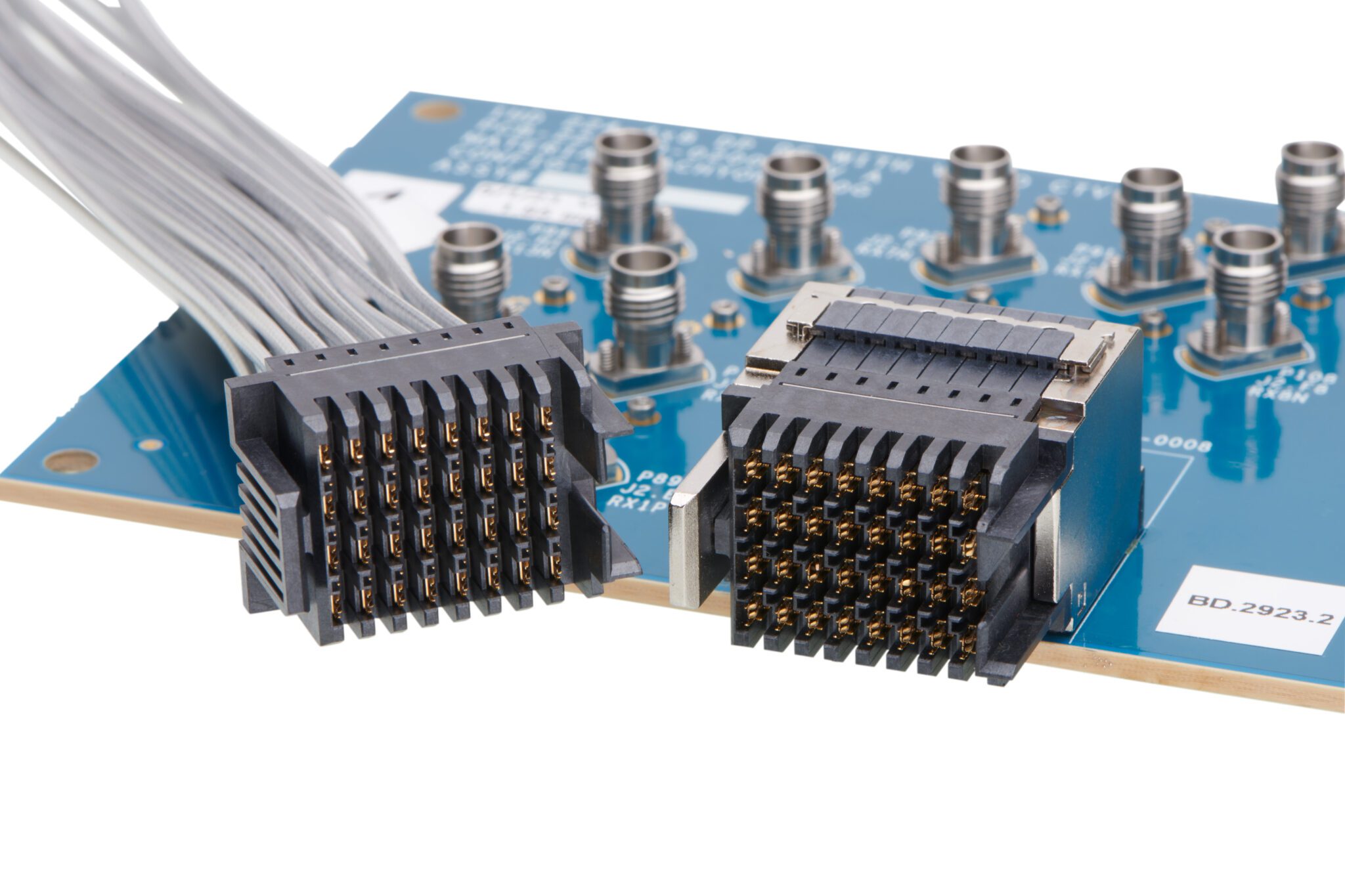

By enabling rapid data exchange between GPUs, accelerators, and other components, high-speed board-to-board connectors contribute significantly to the efficiency of AI data centers. These connectors provide uninterrupted communications between processors, memory modules, and other critical components and must be resilient enough to withstand environmental stresses, such as vibration, temperature fluctuations, shock, and physical handling.

The ability to support high data transfer rates is instrumental in achieving the performance levels required for complex computations and data processing tasks. Moreover, connectors’ high data transfer rates are especially beneficial in training and inference tasks, where timely processing of data is crucial for the performance of AI algorithms.

High-speed board-to-board connectors must be designed with a focus on signal integrity to transmit data accurately and free of distortion. Reliability is crucial in applications where data accuracy is non-negotiable, such as those involving medical devices and aerospace systems.

These connectors support varying stack heights, providing engineers with greater design freedom to address specific AI application requirements, especially where space is at a premium. For instance, mixing and matching 2.5 mm and 5.5 mm connectors enables engineers to fine-tune connector stack height for optimal performance. Dense connector configurations save valuable space on printed circuit boards (PCBs).

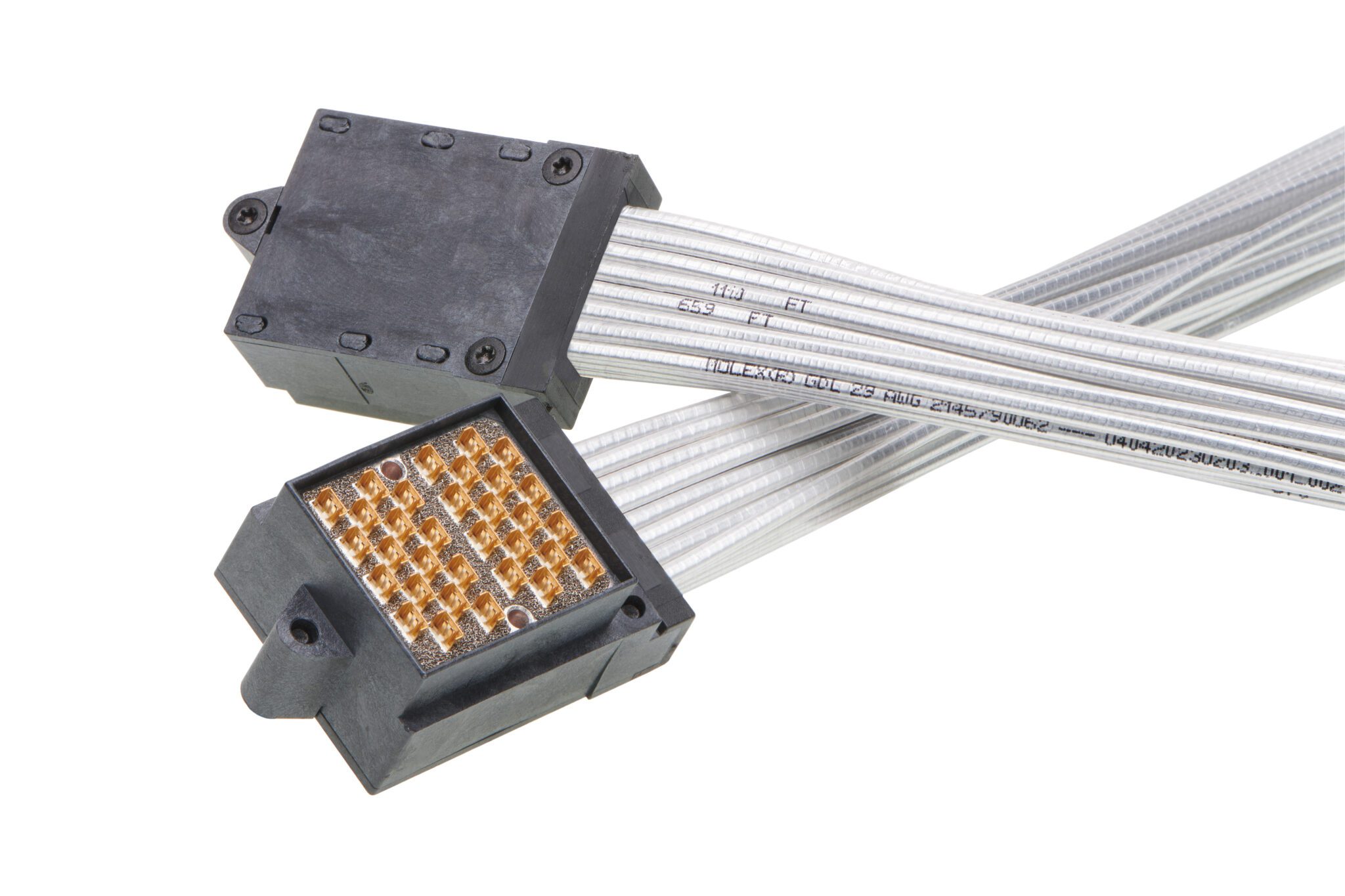

Molex’s Mirror Mezz Enhanced high-speed, mezzanine board-to-board connectors improve impedance tolerance, reduce crosstalk, maintain industry-leading density, and support 224 Gb/s-PAM4. These interchangeable, hermaphroditic connectors cross-mate to create ultra-low and medium stack heights of 5 mm, 8 mm, and 11 mm. Two connectors can be combined to create three different configurations within the same footprint, while traditional connector designs require six connectors. To ensure reliable performance at higher speeds, Molex optimized the terminal structure with staggered pin placement and upgraded the manufacturing process to insert molding to achieve the precise placement and tolerance needed for higher performance.
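The stack-height arithmetic can be checked with a small sketch. It assumes that the mated stack height is simply the sum of the two connector heights, and that a traditional gendered design needs a dedicated plug and receptacle for each stack height; both are illustrative assumptions rather than statements from a datasheet, and the names below are hypothetical.

```python
from itertools import combinations_with_replacement

# Connector heights mentioned in the article, in millimeters.
CONNECTOR_HEIGHTS_MM = (2.5, 5.5)


def mated_stack_heights(heights_mm):
    """Return every distinct stack height from mating any two connectors.

    Assumption: mated height equals the sum of the two connector heights.
    Because the connectors are hermaphroditic, any part can mate with any
    other part, including an identical one.
    """
    pairs = combinations_with_replacement(heights_mm, 2)
    return sorted({a + b for a, b in pairs})


heights = mated_stack_heights(CONNECTOR_HEIGHTS_MM)
print(heights)  # [5.0, 8.0, 11.0]

# Assumption: a gendered design needs one plug and one receptacle
# per stack height.
traditional_part_count = 2 * len(heights)
print(len(CONNECTOR_HEIGHTS_MM), "parts versus", traditional_part_count)
```

Under these assumptions, two part numbers cover the 5 mm, 8 mm, and 11 mm configurations, where a gendered approach would need six.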

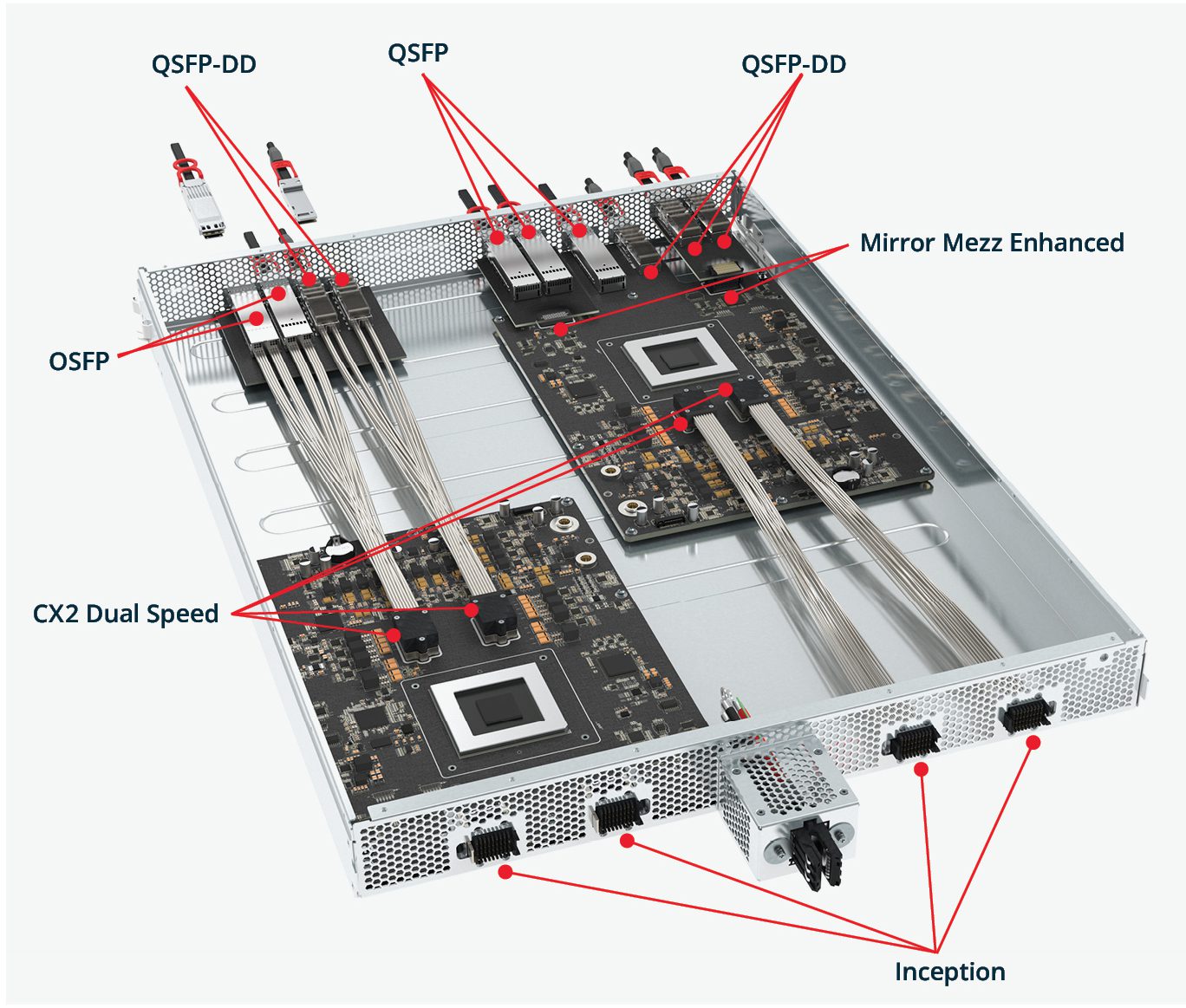

Mirror Mezz Enhanced joins an end-to-end line of innovative 224G solutions from Molex, along with the Inception genderless backplane system, CX2 Dual Speed near-ASIC connector-to-cable system, OSFP 1600 I/O Solutions, and QSFP 800 and QSFP-DD 1600 I/O Solutions.

Inception, designed from a cable-first perspective, offers greater application flexibility with support for variable pitch densities, optimal signal integrity, and easy integration with multiple system architectures.

CX2 Dual Speed is Molex’s 224 Gb/s-PAM4 near-ASIC connector-to-cable system. It provides screw engagement after mating, an integrated strain-relief feature, a reliable mechanical wipe, and a fully protected “thumb proof” mating interface to boost long-term reliability.

Rounding out the portfolio are OSFP 1600 Solutions, including SMT connector and cage, BiPass, and direct attach cable (DAC) and active electrical cable (AEC) solutions built for 224 Gb/s-PAM4 per lane, or an aggregate speed of 1.6T per connector. Additionally, QSFP 800 and QSFP-DD 1600 solutions provide upgraded capabilities for SMT connector and cage, BiPass, and DAC and AEC solutions for 224 Gb/s-PAM4 per lane, or an aggregate speed of up to 1.6T per connector.
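The relationship between per-lane rate and aggregate speed is a simple multiplication, sketched below. The lane counts (eight for OSFP and QSFP-DD, four for QSFP) and the nominal 200 Gb/s of payload per 224 Gb/s-PAM4 lane are assumptions based on common industry convention, not figures stated in this article, and `aggregate_tbps` is a hypothetical helper.

```python
# Assumption: each 224 Gb/s-PAM4 lane carries a nominal 200 Gb/s of payload,
# with the remainder of the line rate consumed by coding overhead.
NOMINAL_LANE_GBPS = 200

# Assumption: electrical lane counts typical of each form factor.
LANES_PER_CONNECTOR = {
    "OSFP 1600": 8,
    "QSFP 800": 4,
    "QSFP-DD 1600": 8,
}


def aggregate_tbps(lanes: int, lane_gbps: float = NOMINAL_LANE_GBPS) -> float:
    """Return the nominal aggregate throughput of one connector in Tb/s."""
    return lanes * lane_gbps / 1000


for name, lanes in LANES_PER_CONNECTOR.items():
    print(f"{name}: {lanes} lanes -> {aggregate_tbps(lanes):.1f}T")
```

With these assumptions, an eight-lane connector reaches a nominal 1.6T and a four-lane connector reaches 0.8T, which matches the numbers in the product names.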

Together, this family of high-speed connectors enables data transfer capacity that will help customers keep up with the demands of data-intensive applications by facilitating smooth, rapid communications between components. The result is a box-to-box scale-up capability that enables data center architects to interconnect multiple chassis through two-connector cable backplanes as well as three-connector and four-connector systems, with each segment optimized for speed and mechanical robustness.

The evolution of high-speed connectors will deliver future innovations focused on further miniaturization, increased data transfer rates, and enhanced power efficiency. Keeping pace with these trends will be crucial for industries that rely on high-performance computing and AI data centers.

To learn more about solutions for AI and high-performance computing, visit Molex.
