Connectors Contribute to Energy Reduction in the Data Center

Much of the computing equipment world, and particularly data centers dedicated to artificial intelligence workloads, has a problem. These facilities consume too much electrical energy, generate too much heat, and use inordinate amounts of water. Communities that once welcomed the construction of a data center now question whether the local infrastructure can support the enormous new drain on their resources. The dynamic workloads of an AI data center can fluctuate rapidly, stressing the local grid and threatening its reliability. Designers of AI computing clusters are struggling to find ways to keep components below critical temperatures. This problem did not suddenly appear; it has been growing in importance for years.

Leading-edge components have been driving the trend toward higher energy consumption. Advanced switches, for example, have followed a roadmap to higher performance but are also responsible for a 22X increase in power consumption over a 10-year period.

Speeds have continued to surge from 10 GbE to 800 GbE, with 1.6 TbE already on the roadmap. With the advent of artificial intelligence, GPU and accelerator chips have taken center stage as the leading consumers of power. Devices such as the Nvidia H100 GPU can draw up to 700 watts, while the Blackwell B200 is rated at up to 1200 watts. Next-generation devices are anticipated to require more than 1500 watts each. AI data centers already in operation consist of tens of thousands of these chips, far exceeding conventional power demands. Adding the power required for storage, standard processors, networking equipment, and cooling systems, the total power requirement of a facility can quickly reach 150 MW, comparable to that of a medium-sized city. The importance of power consumption at this level has led the industry to begin rating the computing capacity of AI data centers in megawatts.
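The arithmetic behind a facility-level figure like this can be sketched in a few lines. The example below is a rough illustration only: the chip count, the fraction of additional IT load, and the power usage effectiveness (PUE) value are assumed inputs chosen for the example, not figures from any specific data center.

```python
def facility_power_mw(gpu_count, gpu_watts, other_it_fraction=0.3, pue=1.2):
    """Rough facility power estimate in megawatts.

    other_it_fraction: assumed extra IT load (storage, CPUs, networking)
                       expressed as a fraction of the GPU load.
    pue: assumed power usage effectiveness (facility power / IT power),
         which folds in cooling and power distribution overhead.
    """
    gpu_load_w = gpu_count * gpu_watts
    it_load_w = gpu_load_w * (1 + other_it_fraction)
    return it_load_w * pue / 1e6


# Hypothetical cluster: 80,000 accelerators at 1200 W each.
estimate_mw = facility_power_mw(gpu_count=80_000, gpu_watts=1200)
print(f"Estimated facility power: {estimate_mw:.0f} MW")
```

With these assumed inputs the estimate lands close to 150 MW, which shows how quickly tens of thousands of kilowatt-class chips add up once supporting equipment and cooling are included.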

Not only are individual components consuming more power, but the packaging density of AI computers has greatly increased. The extreme data rates of AI computing clusters require that connections within the system be as short as possible. The result is that thousands of heat-producing components are being tightly packed together in high-density server racks. Large AI data centers may be filled with server racks, each of which consumes 15 to 20 kW of power.

Ensuring that chips that may cost upwards of $25,000 remain below their rated maximum operating temperature is forcing the industry to evolve from forced air to several variations of circulating or immersed liquid cooling technology. The additional pumps and chillers add to the total energy consumption of the data center.

Efficiently distributing electrical power at these levels to hundreds of racks in a data center that may cover several acres has become a major challenge for designers. Some data centers have already reached the limit of locally available power, prompting consideration of spreading the power demand among multiple data centers linked by high-speed optical cables. Pressure to achieve both economic and ecological objectives magnifies the problem. Fortunately, the connector industry offers solutions to power distribution challenges that range from utility-grade high-voltage interfaces to PCB-level 0.75 V to 1.5 V device loads.

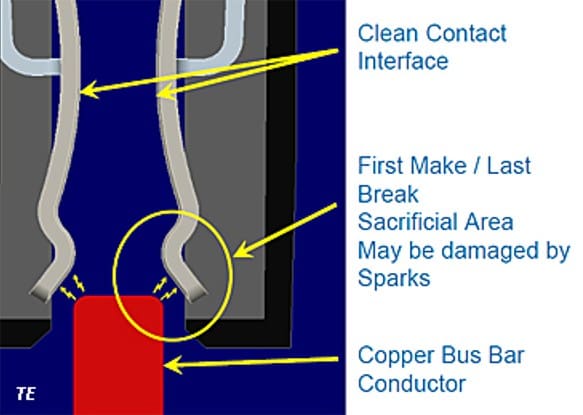

Power distribution connectors have been in continuous evolution for many years. Contact alloys and the plating of power contacts have been upgraded to minimize bulk and contact resistance, while contact geometry has been redesigned to increase reliability. Power connector contacts are often set at several different mating depths to establish a make-first, break-last sequence that prevents contact damage from arcing during hot mating and disconnect.

Some power contact designs feature a specific sacrificial area at the front end of a power contact. The leading edge of the contact may be damaged at initial mating, but a clean final contact area assures low contact resistance when fully mated.

The vertical profile of connectors has been reduced to minimize obstruction of cooling airflow. In some designs, the housing has been redesigned to allow air to circulate through the connector body (venting) to increase the total current rating. Operator safety is an issue for power connectors and has prompted the introduction of “finger-proof” designs. Demand for higher operating voltages has increased the availability of power connectors rated at 400+ VDC. Modular power connectors can mix and match combinations of low-current signal contacts with high-current power contacts, increasing packaging density and design flexibility. Increasing interest in immersion cooling has led several connector manufacturers to modify the plastic bodies of PCB connectors to be compatible with liquid coolants and to adjust the impedance of the connector to compensate for the difference between contacts surrounded by air and those surrounded by liquid.

At power generation and distribution points, utility-grade power connectors that range from large, lugged terminals, splices, and taps to high-voltage switches minimize loss and arcing. They are typically found in traditional coal, natural gas, and nuclear generating stations and in power substations, as well as in battery backup systems. A new class of connectors has been developed specifically to address the unique environmental, mechanical, and electrical requirements of solar and wind power generation facilities.

Traditional data centers distribute 240- or 208-volt AC to the power shelf located in each server rack. The voltage is converted to 48 VDC and supplied to individual servers, where it is stepped down to 1.5, 3.3, 5, and 12 VDC and distributed to individual devices. The power consumption of a single AI server can exceed 10 kW, with six to eight servers in a rack. In an effort to reduce power consumption at the rack level, discussion has begun regarding the challenge of distributing 480 VAC and up to 800 VAC directly to the rack and possibly directly to the server. Connector manufacturers are beefing up their lines of high-voltage power connectors in anticipation of these changes.
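The appeal of higher distribution voltages follows from I = P / V: for the same rack power, a higher voltage means proportionally less current, and conduction loss in a given conductor falls with the square of that current. The sketch below is a simplified illustration that treats each voltage as a DC-equivalent feed, ignoring power factor, three-phase wiring, and conversion efficiency. The 80 kW rack is an assumption built from the upper end of the figures above (eight servers at 10 kW each).

```python
def rack_current_amps(rack_power_w, voltage_v):
    """Current needed to deliver rack_power_w at voltage_v (I = P / V)."""
    return rack_power_w / voltage_v


rack_power_w = 8 * 10_000  # assumed: 8 servers at 10 kW each
baseline_a = rack_current_amps(rack_power_w, 48)  # 48 V rack bus as the reference

for voltage_v in (48, 480, 800):
    current_a = rack_current_amps(rack_power_w, voltage_v)
    # Loss in a fixed conductor scales with the square of the current.
    relative_loss = (current_a / baseline_a) ** 2
    print(f"{voltage_v:>4} V: {current_a:7.1f} A, "
          f"relative I^2R loss {relative_loss:.4f}")
```

Under these assumptions the same rack needs well over 1,000 A at 48 V but only 100 A at 800 V, which is why higher-voltage distribution places new demands on connector voltage ratings while easing current ratings.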

At the rack and server levels, connectors play critical roles in ensuring reliable and efficient power distribution. Blind mate, hot-swap connectors enable quick field servicing, a critical requirement in high-performance AI computer clusters.

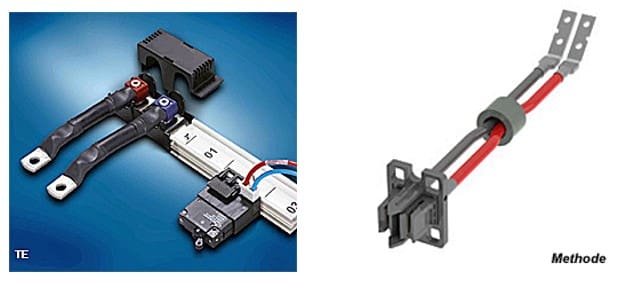

Distributing hundreds of amps of current within the rack with minimal loss is often accomplished using either large-conductor copper cables or busbars.
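To give a sense of scale for "minimal loss," the resistance of a solid copper bar can be estimated from R = ρL/A and the dissipated power from P = I²R. The sketch below uses an approximate room-temperature resistivity for copper; the bar dimensions and the 500 A load are hypothetical values chosen for illustration, and the calculation ignores temperature rise and contact resistance at the connector interfaces.

```python
COPPER_RESISTIVITY_OHM_M = 1.68e-8  # approximate value for copper at 20 °C


def busbar_resistance_ohm(length_m, width_m, thickness_m):
    """DC resistance of a rectangular bar: R = rho * L / A."""
    return COPPER_RESISTIVITY_OHM_M * length_m / (width_m * thickness_m)


def busbar_loss_w(current_a, resistance_ohm):
    """Power dissipated in the bar: P = I^2 * R."""
    return current_a ** 2 * resistance_ohm


# Hypothetical bar: 2 m long, 50 mm x 10 mm cross-section, carrying 500 A.
current_a = 500
resistance = busbar_resistance_ohm(length_m=2.0, width_m=0.050, thickness_m=0.010)
loss_w = busbar_loss_w(current_a, resistance)
drop_v = current_a * resistance

print(f"Resistance:   {resistance * 1e6:.1f} micro-ohms")
print(f"Voltage drop: {drop_v * 1e3:.1f} mV")
print(f"Power loss:   {loss_w:.1f} W")
```

Because loss grows with the square of the current, doubling the current through the same bar quadruples the heat it must shed, while doubling the cross-section only halves the resistance.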

The Molex COEUR-enabled connector family features redundant contact beams to achieve high power density with multipole configurations for design flexibility.

Busbar interconnect design varies widely, including the ability to extract multiple voltages from one laminated busbar.

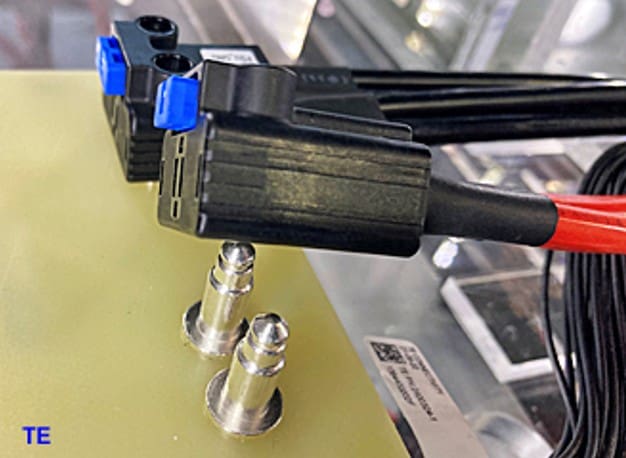

Blind-mating connectors located at the rear of each server mate with a vertical solid copper busbar. TE Connectivity recently showed a busbar cooled by circulating water, which raises its capacity to as much as 750 kW in AI applications.

The Open Compute Project uses a combination of a 48 V busbar to distribute power to each shelf and a high-current pin-and-socket connector to bring AC voltage into the AC-to-DC power shelf.

Open Compute battery backup units plug into the battery backup shelf via a hot swappable blind-mate PwrBlade ULTRA HD connector that consists of four 30-amp power contacts and 30 signal contacts.

Anderson Power Pole connectors offer current ratings to 350A. They feature extensive design flexibility including durable, rugged housings that incorporate touch-proof safety features. Some versions are stackable to provide custom configurations as well as efficient use of limited space.

Applications that require wire-to-PCB as well as board-to-board interconnects can be addressed by a host of power connectors from many domestic and global suppliers.

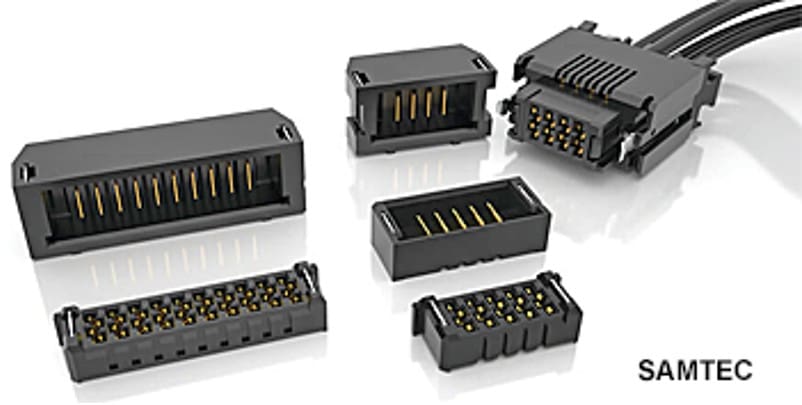

Samtec, for instance, offers the Micro Power System of power connectors, which includes stacking, wire-to-PCB, and custom cable assemblies.

As demand for power continues to increase, reliable, high-current, low-loss, and cost-effective interconnects will continue to play a critical role in the design of next-generation computing equipment.
