Server Design is Getting Complicated

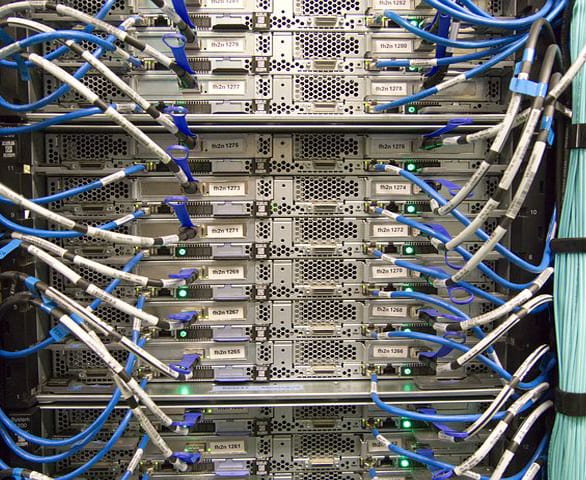

As data rates increase, designers are encountering performance limits, signal loss, higher costs, and congested PCBs. High-speed internal cable assemblies offer a potential solution.

The increased complexity of enterprise computing equipment is one of the many factors that make today’s computing environment challenging. These complexity issues are further exacerbated by the performance limits designers are encountering in standard build materials as data rates increase.

Increasing data rates require faster rise times, which incur more loss on high-speed signals. Higher-performance laminate materials can be used to compensate, but they come with a significant cost penalty, and the advantages may still not be enough. Newer processors and motherboards are handling more and more I/O, contributing to PCB trace and layout density. PCI Express (PCIe) lane counts have increased, and some processors now have as many as 40 bidirectional PCIe lanes. In addition to PCIe, onboard storage applications may need to route high-speed SAS. These PCIe and SAS signals may have to travel much longer distances within a server chassis, incurring the associated losses. PCIe retimers are an option, but they increase cost and complexity. Retimers are also a poor fit for high-performance computing, because they add latency to signal transmission.

These competing needs can add a lot of congestion to a PCB design. Increasing layer count can help with routing, but it also raises cost and may decrease performance. Decreasing trace width is not a good option, as it further shortens signal reach at a given data rate. Widening traces to compensate increases congestion and creates routing problems leading to and from fine-pitch components.
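The reach trade-off described above can be sketched as simple loss-budget arithmetic. This is a minimal illustration, not a design rule: the function name `max_trace_reach_inches` and its parameters are hypothetical, and the numbers in the usage lines are placeholders rather than measured values for any laminate or trace geometry.

```python
def max_trace_reach_inches(loss_budget_db: float,
                           fixed_loss_db: float,
                           loss_db_per_inch: float) -> float:
    """Estimate how far a trace can run before the channel loss budget is spent.

    All three inputs are assumptions supplied by the caller:
    loss_budget_db   -- total insertion loss the link can tolerate
    fixed_loss_db    -- loss already consumed by packages, vias, and connectors
    loss_db_per_inch -- trace loss per inch at the frequency of interest
    """
    if loss_db_per_inch <= 0:
        raise ValueError("loss_db_per_inch must be positive")
    # Whatever budget is left after fixed losses can be spent on trace length.
    remaining_db = max(loss_budget_db - fixed_loss_db, 0.0)
    return remaining_db / loss_db_per_inch


# Placeholder values only: a lossier (narrower) trace shortens reach.
wide_trace_reach = max_trace_reach_inches(20.0, 6.0, 1.0)
narrow_trace_reach = max_trace_reach_inches(20.0, 6.0, 1.4)
print(f"wide trace:   {wide_trace_reach:.1f} in")
print(f"narrow trace: {narrow_trace_reach:.1f} in")
```

With real figures for a given laminate, trace geometry, and data rate, the same arithmetic shows how quickly a higher per-inch loss eats into the distance available between components.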

What is a system architect to do?

The last several years have seen an increase in the adoption of high-speed internal cable assemblies. Some are specified by industry standards bodies, such as the ANSI T10 SAS committee. SAS currently supports 12Gb/s and has components defined for that rate, and the SAS roadmap calls for 24Gb/s next. Others, such as PCI Express (PCIe), have no specified internal assemblies, though implementations have often borrowed SAS-type assemblies. PCIe 3.0 runs at 8GT/s, and the committee is working on specifications for internal assemblies in version 4 of the standard, which has a data rate of 16GT/s.
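To put the data rates above in signal-integrity terms, the sketch below converts each line rate into its Nyquist (fundamental) frequency and its per-lane payload rate. It assumes two-level NRZ signaling, 8b/10b encoding for 12Gb/s SAS, and 128b/130b encoding for PCIe 3.0 and 4.0. The encoding figures are not stated in this article, and the helper names `nyquist_ghz` and `payload_gbps` are hypothetical.

```python
def nyquist_ghz(line_rate_g: float) -> float:
    """Fundamental frequency of an NRZ signal: half the line rate."""
    return line_rate_g / 2.0


def payload_gbps(line_rate_g: float, payload_bits: int, coded_bits: int) -> float:
    """Per-lane payload rate after line-encoding overhead is removed."""
    return line_rate_g * payload_bits / coded_bits


# (line rate in Gb/s or GT/s, payload bits, coded bits) -- encodings are assumptions
LINKS = {
    "SAS 12Gb/s": (12.0, 8, 10),
    "PCIe 3.0": (8.0, 128, 130),
    "PCIe 4.0": (16.0, 128, 130),
}

for name, (rate, payload, coded) in LINKS.items():
    print(f"{name}: Nyquist {nyquist_ghz(rate):.1f} GHz, "
          f"payload {payload_gbps(rate, payload, coded):.2f} Gb/s per lane")
```

The Nyquist frequency is the usual point at which channel insertion loss is quoted, so doubling the data rate from PCIe 3.0 to 4.0 moves that point from 4 GHz to 8 GHz.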

PCIe is used to connect the processor to SAS controllers or to other peripheral devices, such as general-purpose graphics processing units (GPGPUs). GPGPUs can use up to 16 lanes of PCIe (32 pairs, plus clock and grounds) and are frequently used in mission-critical and/or high-performance applications. Today’s enterprise servers can incorporate several GPGPUs into a chassis. It is not practical to connect the GPGPUs through PCBs, given the PCB losses and the distances required for all of the system components.
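The pair count quoted above follows from each PCIe lane carrying one transmit pair and one receive pair. The short sketch below works through that arithmetic and extends it to a chassis with several GPGPUs. The function names and the four-device example are illustrative assumptions, and clock and ground conductors are left out of the count.

```python
PAIRS_PER_LANE = 2  # one transmit pair plus one receive pair per PCIe lane


def signal_pairs(lanes: int) -> int:
    """Differential signal pairs needed for a PCIe link of the given width."""
    return lanes * PAIRS_PER_LANE


def total_signal_pairs(devices: int, lanes_per_device: int = 16) -> int:
    """Pairs to route when several devices each get a full-width link."""
    return devices * signal_pairs(lanes_per_device)


print(signal_pairs(16))        # pairs for a single x16 GPGPU link
print(total_signal_pairs(4))   # hypothetical chassis with four x16 GPGPUs
```

Each added GPGPU brings another 32 high-speed pairs to route, which is why routing density grows so quickly in multi-GPGPU systems.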

Differential pair cable assemblies can bridge this gap and provide more mechanical options for GPGPU and component placement. Placement is an important consideration, as the power consumption and heat output of systems increase with the processors and GPGPUs. Airflow is critical and must not be blocked. The challenge in using cable assemblies is to avoid obstructing airflow while still meeting the desired mechanical and connectivity requirements.

Thus it is important to consider the mechanical criteria around cable assemblies. Low profile, low loss, density, and flexibility are all desirable features. Some cable assemblies are cumbersome to route through a system, requiring generous bend radii that consume even more space. Other cable assemblies are low profile, but require elaborate folding. Many do not have full 360° flexibility, or they use solid primary signal conductor construction, which contributes to cable stiffness.

I-PEX Connectors’ CABLINE®-CA-II micro-coaxial wire-to-board connector has a 0.4mm pitch, a 1mm (±0.1mm) mated height, 360° EMI shielding, a multipoint ground design, and a physical locking cover that prevents accidental unmating. Designed for high-data-rate applications, including Thunderbolt™ 3, USB 3.0/3.1, PCIe 3.0/4.0, SAS 3.0, HDMI 2.0, MIPI, and eDP HBR 2/3, it delivers up to 20Gb/s per lane, mounts in the same PCB layout as the CABLINE-CA receptacle, and accepts a maximum of 38AWG for 90Ω differential impedance, a maximum of 40AWG for 100Ω differential impedance, and 34AWG discrete wires for power delivery.

There is a long history of micro-coaxial assemblies being used in laptops and other devices, at speeds of up to 20Gb/s in some USB-C implementations. Coaxial wire can be differentially driven with minimal impact on signal integrity. Cable assemblies utilizing micro-coaxial wire are extremely flexible, as they are often made with stranded primary conductors. A further benefit is the economies of scale that the mobile computing market provides, which reduce the acquisition cost of micro-coaxial assemblies.

The performance of micro-coaxial assemblies is enhanced by direct-to-contact termination. This avoids both the PCB losses and the additional terminations associated with PCBs, which are extra sources of discontinuities and reflections that can degrade signal integrity.

Cables can be constructed with the wires held in flat configurations, bundled configurations, or a mix of the two. Available connectors provide extremely low-profile terminations, allowing for fully shielded right-angle launches below 2mm high, vertical shielded terminations with latching, and others.

Applications range from straightforward high-speed jumpers, similar to how assemblies are used today, to below-PCB routing that fits between a motherboard and the chassis wall. High-temperature cable versions are also available for applications where temperature is a concern.

Visit I-PEX Connectors online.
